The big picture. What artificial intelligence actually is, what it isn't, and why it matters now. No sci-fi, just reality.

Artificial intelligence is software that can perform tasks that normally require human judgment. Recognizing a face in a photo, translating a sentence, recommending a song you might like, flagging a fraudulent transaction. That is AI. Not a robot with feelings, not a digital brain, not a sentient being plotting world domination. Software that makes decisions based on patterns in data.

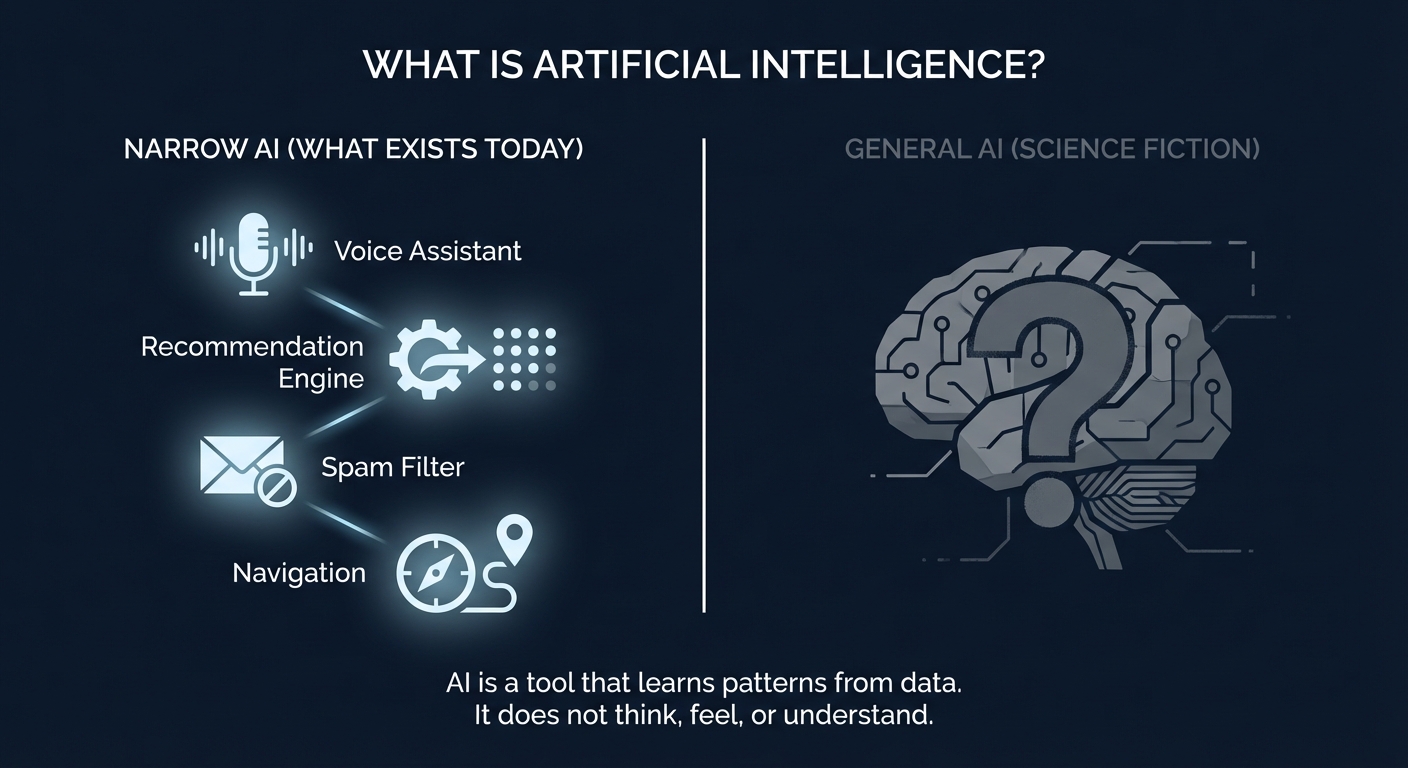

The field broadly splits into two categories. Narrow AI (also called weak AI) does one specific thing well. The spam filter in your email, the voice assistant on your phone, the system that tags your photos. Every AI system you interact with today is narrow AI. It excels at its task and is useless at everything else.

General AI (sometimes called AGI or strong AI) would be a system that can learn and reason across any domain, the way humans do. This does not exist yet. Researchers disagree on whether it is five years away or fifty. What matters for you right now: every AI tool you use is narrow AI, purpose-built for a specific job.

Think of it like this: a calculator is extremely good at math but cannot write a poem. Current AI is similar. ChatGPT writes impressive text but cannot drive your car. Google Maps optimizes your route but cannot compose an email. Each system is powerful within its lane.

Hollywood and headlines have created expectations that do not match reality. Before going further, let's clear up the most persistent myths.

AI recognizes patterns in data. It does not understand, feel, or have opinions. When ChatGPT writes a convincing paragraph, it is predicting the most likely next word based on training data. Impressive output, zero comprehension.

AI changes jobs; it does not eliminate them wholesale. The ATM did not kill bank tellers: US teller employment kept growing for decades after ATMs became common. AI automates tasks within jobs. People who learn to work with AI become more productive. People who ignore it risk falling behind.

AI systems hallucinate, meaning they generate confident-sounding information that is factually wrong. They reflect biases present in their training data. AI is a tool. Like any tool, the output is only as good as the input and the person checking the result.

The term 'artificial intelligence' dates back to the 1956 workshop at Dartmouth College that launched the field. Spam filters, recommendation engines, and voice assistants have used AI for over a decade. What changed recently is scale: larger models, more data, and cheaper compute made AI accessible to everyone.

If you have a smartphone, you use AI dozens of times a day without thinking about it. Here are the systems working in the background right now.

Siri (2011), Google Assistant (2016), Alexa. Speech recognition + natural language processing.

Netflix, Spotify, YouTube. Algorithms analyze your behavior to predict what you want next.

Gmail blocks 10+ million spam emails per minute using machine learning classification.

Google Maps, Waze. Real-time traffic prediction and route optimization powered by AI.

Your bank flags unusual transactions in real-time. AI compares patterns against your history.

Google Photos, Apple Photos. Face recognition and scene detection sort your library automatically.
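To make "compares patterns against your history" concrete, here is a minimal sketch of one simple way to flag an unusual transaction. It is an illustration only: the function name `is_unusual`, the threshold of 3.0, and the sample payments are all made up, and real banks use far richer models that look at location, merchant, timing, and more.

```python
from statistics import mean, stdev

def is_unusual(history, amount, threshold=3.0):
    """Flag an amount that lies far outside the customer's usual spending.

    Uses a z-score: how many standard deviations the new amount sits
    from the average of past amounts. The threshold of 3.0 is an
    illustrative choice, not a banking standard.
    """
    avg = mean(history)
    spread = stdev(history)
    if spread == 0:
        # Every past payment was identical, so any other amount stands out.
        return amount != avg
    z_score = abs(amount - avg) / spread
    return z_score > threshold

# Hypothetical past card payments in euros.
past_payments = [12.50, 8.99, 23.40, 15.00, 9.75, 18.20, 11.30]

print(is_unusual(past_payments, 14.00))   # an ordinary purchase
print(is_unusual(past_payments, 950.00))  # far outside the usual range
```

The point is not the formula but the idea: the system has no notion of "fraud", only of what is typical for you and how far a new event deviates from it.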

You do not need to understand the math to use AI effectively. But knowing the basics helps you understand why AI sometimes gives wrong answers, and why some tasks are easy for AI while others are hard.

Most modern AI learns through a process called machine learning. The idea is straightforward: show the system millions of examples, and it finds the patterns itself. Show it a million photos labeled 'cat' and 'not cat', and it learns to distinguish cats. Show it billions of sentences from the internet, and it learns how language works.
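A toy version of "learning from labeled examples" fits in a few lines. The sketch below assumes each photo has already been reduced to two made-up numbers (ear pointiness and snout length); real image models learn their own features from raw pixels. The method shown, a nearest-centroid classifier, is one of the simplest learning techniques and is used here only for illustration.

```python
def train(examples):
    """Learn one average point (centroid) per label from labeled examples."""
    sums, counts = {}, {}
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
            counts[label] = 0
        sums[label] = [s + f for s, f in zip(sums[label], features)]
        counts[label] += 1
    return {label: [s / counts[label] for s in sums[label]] for label in sums}

def predict(centroids, features):
    """Pick the label whose centroid is closest to the new example."""
    def squared_distance(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return min(centroids, key=lambda label: squared_distance(centroids[label], features))

# Made-up features: (ear pointiness, snout length), each scaled 0 to 1.
labeled_examples = [
    ((0.9, 0.2), "cat"),
    ((0.8, 0.3), "cat"),
    ((0.85, 0.25), "cat"),
    ((0.3, 0.8), "not cat"),
    ((0.2, 0.9), "not cat"),
    ((0.4, 0.7), "not cat"),
]

model = train(labeled_examples)
print(predict(model, (0.88, 0.22)))  # close to the cat examples
print(predict(model, (0.25, 0.85)))  # close to the non-cat examples
```

Nobody wrote a rule saying what a cat looks like. The program derived its own rule from the examples, which is the core of machine learning.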

This learning process has three ingredients. Training data: the examples the AI learns from. The more diverse and high-quality this data, the better the results. A model: the mathematical structure that stores the patterns. Think of it as a very complex recipe that converts inputs to outputs. Compute: the processing power needed to crunch through all that data. This is why AI breakthroughs are closely tied to advances in computer chips.
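The three ingredients can be seen side by side in a very small example. In this sketch the training data is five points that follow the rule y = 2x + 1, the model is just two numbers (`w` and `b`), and compute is the number of training steps. The learning rate and step counts are arbitrary choices for illustration.

```python
# Training data: example inputs paired with the outputs we want.
# These points follow y = 2x + 1, a rule the model is never told.
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0), (4.0, 9.0)]

def fit(data, steps, learning_rate=0.02):
    """Fit the model y = w * x + b by gradient descent on squared error."""
    w, b = 0.0, 0.0  # the model: two numbers that store the pattern
    n = len(data)
    for _ in range(steps):  # compute: each step is one pass over the data
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / n
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

def mean_squared_error(data, w, b):
    """Average squared gap between the model's outputs and the data."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)

# More compute (more steps) brings the model closer to the hidden rule.
for steps in (10, 100, 5000):
    w, b = fit(data, steps)
    print(steps, round(w, 3), round(b, 3), mean_squared_error(data, w, b))
```

With enough steps, `w` and `b` settle close to 2 and 1. Large language models follow the same basic recipe, with billions of parameters instead of two and vastly more data and compute.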

The key insight: AI does not 'know' anything. It has learned statistical relationships between pieces of data. When you ask ChatGPT a question, it is not retrieving a stored answer. It is calculating, word by word, what the most probable response looks like based on patterns in its training data. This is why it can sound authoritative while being completely wrong.
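The idea of "calculating the most probable next word" can be sketched with a drastically simplified stand-in. The code below counts which word follows which in a tiny made-up text and always picks the most frequent follower. ChatGPT does not work from a counting table like this; it uses a large neural network trained on far more text. The principle of choosing likely continuations from observed patterns is the same.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count, for every word, which words follow it in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def next_word_probabilities(following, word):
    """Turn the raw follower counts for one word into probabilities."""
    counts = following[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}

def generate(following, start, length):
    """Repeatedly append the most probable next word."""
    output = [start]
    for _ in range(length):
        counts = following.get(output[-1])
        if not counts:
            break  # the model never saw anything after this word
        output.append(counts.most_common(1)[0][0])
    return " ".join(output)

# A tiny, made-up training text.
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigrams(corpus)

print(next_word_probabilities(model, "the"))
print(generate(model, "the", 5))
```

The generated text is locally fluent because each word plausibly follows the previous one, yet nothing checks whether the whole is true or sensible. That is the small-scale version of sounding authoritative while being wrong.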

AI research has been going on for almost 70 years. So why does it suddenly feel like everything changed? Because three things converged at the same time: massive amounts of data (the internet), powerful hardware (GPUs originally built for gaming), and algorithmic breakthroughs (the transformer architecture, published by Google researchers in 2017). The result was a step change in what AI could do.

In 1997, IBM's Deep Blue defeated world chess champion Garry Kasparov in a six-game match. Impressive, but this was brute-force calculation, not learning.

Siri launched in 2011 as the first mainstream voice assistant, built into the iPhone 4S. AI moved from labs to pockets.

In March 2016, DeepMind's AlphaGo defeated Lee Sedol 4-1 in Seoul. Unlike chess, Go has more possible board positions than there are atoms in the observable universe. Brute force was not enough; the AI had to learn strategy.

In 2017, Google researchers published 'Attention Is All You Need', the paper that introduced the transformer. This architecture powers nearly every major AI model today, from GPT to Gemini to Claude.

OpenAI released ChatGPT on November 30, 2022. It reached 1 million users in 5 days and 100 million monthly users within two months. AI went from a tech topic to a dinner-table conversation.

GPT-4 (March 2023), Google Gemini, Anthropic Claude, Meta Llama, Midjourney, Stable Diffusion. AI moved into text, images, code, video, and music. New models launch monthly. Competition drives rapid improvement.

AI is not something that happens to you. It is a tool you can learn to use. The gap between people who use AI effectively and those who do not is widening fast. Not because AI is magic, but because it saves real time on real tasks.

Writing an email draft, summarizing a long document, brainstorming ideas, analyzing a spreadsheet, translating text, generating a first version of a presentation. These are tasks where AI is already good enough to be genuinely useful. Not perfect, but useful. The person who can do their job and also leverage AI for the repetitive parts has a real advantage.

The practical approach: pick one task you do repeatedly. Try doing it with an AI tool. See if the result saves you time. If it does, keep using it. If it does not, try a different tool or a different task. That is it. No grand strategy needed. Just start.

The best way to understand AI is to use it. This wiki is here to give you the context. The rest is practice.